Introduction

This article tries to explain the technical reasons behind the success of the Oracle Database Appliance (ODA), a well-known appliance with which Oracle targets small and medium businesses, or specific departments of large companies looking for privacy and isolation from the rest of the IT landscape. This small and relatively cheap appliance (list price around $65,000) has evolved considerably: the storage now reaches 128TB raw, expandable to 256TB, and the two X5-2 servers are the same as those used for the database nodes of the Exadata machine. Many customers, while defining a new database architecture, evaluate the pros and cons of acquiring an ODA compared to the smallest Exadata configuration (one eighth of a rack). If the customer is not looking for a system with extreme performance and horizontal scalability beyond the two X5-2 servers, the Oracle Database Appliance is frequently the retained option.

Some of the major ODA features are:

- High Availability: no single point of failure on all hardware and software components.

- Performance: each server is equipped with 2×18-core Intel Xeon processors and 256GB of RAM, extensible up to 768GB, with cluster communication over InfiniBand. The shared storage offers a multi-tier configuration with HDDs at 7.2K rpm and two types of SSDs, one for frequently accessed data and one for database redo logs.

- Flexibility & Scalability: running RAC, RAC One Node and Single Instance databases.

- Virtualized configuration: designed to offer a Solution-in-a-box, with highly available virtual machines.

- Optimized licensing model: a pay-as-you-grow model that activates an increasing number of CPU cores on demand with the Bare Metal configuration, or caps the resources by combining Oracle VM with the Hard Partitioning setup.

- Time-to-market: no matter whether the ODA has to be installed bare metal or virtualized, this is a standardized and automated process generally completed in one or two days of work.

- Price: the ODA is very competitive when its cost is compared to an equivalent commodity architecture, which, in addition, must be engineered, integrated and maintained by the customer.

At the time of writing, the latest hardware model is ODA X5-2 and the software version is 12.1.2.6.0. This HW and SW combination offers unique features, a few of them not even available on the Exadata machine, such as the possibility to host databases and applications in one single box, or to clone 11gR2 and 12c databases rapidly and space-efficiently using ACFS snapshots.

ODA HW & SW Architecture

Oracle Database Appliance is composed of two X5-2 servers and a shared storage shelf, which can optionally be doubled. Each server has two 18-core Intel Xeon E5-2699 v3 processors, 256GB of RAM (optionally upgradable to 768GB) and two 600GB 10k rpm internal disks in RAID 1 for the OS and software binaries.

This appliance is equipped with redundant network connectivity up to 10Gb, redundant SAS HBAs and storage I/O modules, and a redundant InfiniBand interconnect for cluster communication enabling 40 Gb/second server-to-server communication.

The software components are all part of Oracle “Red Stack” with Oracle Linux 6 UEK or OVM 3, Grid Infrastructure 12c, Oracle RDBMS 12c & 11gR2 and Oracle Appliance Manager.

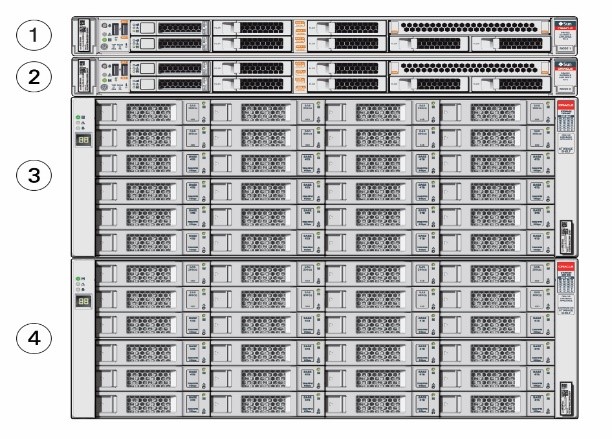

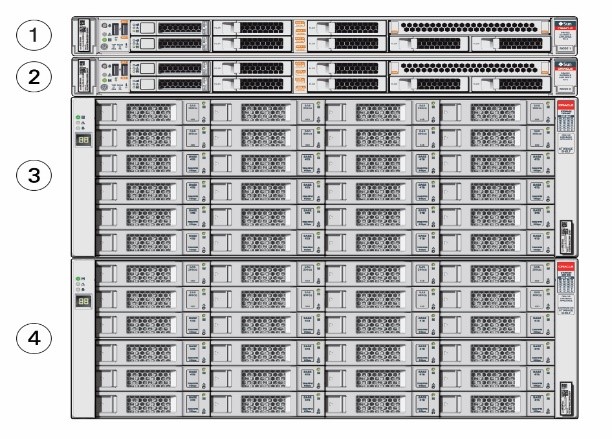

ODA Front view

Components 1 & 2 are the X5-2 servers. Components 3 & 4 are the storage shelf and the optional storage extension.

ODA Rear view

The rear view highlights the multiple redundant connections, including InfiniBand for Oracle Clusterware, ASM and RAC communications. There is no single point of HW or SW failure.

Storage Organization

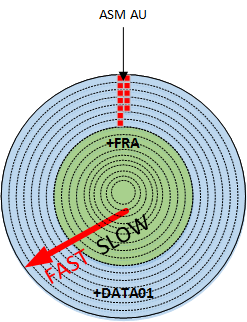

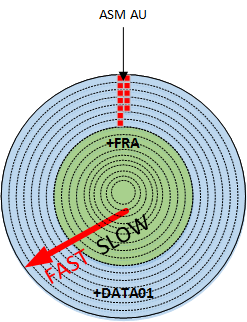

With 16x8TB SAS HDDs, a total raw space of 128TB is available on each storage shelf (64TB with ASM double mirroring and 42.7TB with ASM triple mirroring). To offer better I/O performance without inflating the price, Oracle has added the following SSD devices: 4x400GB, ASM double-mirrored, for frequently accessed data, and 4x200GB, ASM triple-mirrored, for database redo logs.

As shown in the picture, each rotating disk has two slices: the outer, faster partition is assigned to the +DATA ASM disk group, and the inner one is allocated to the +RECO ASM disk group.

This storage optimization allows the ODA to achieve competitive I/O performance. In a production-like environment, using the three types of disks, as per ODA database template odb-24 (https://docs.oracle.com/cd/E22693_01/doc.12/e55580/sizing.htm), Trivadis has measured 12,000 I/O operations per second and a throughput of 2300 MB/s with an average latency of 10ms. As per the Oracle documentation, the maximum number of I/O operations per second of the rotating disks with a single storage shelf is 3300, but this value increases significantly when the hottest data files are relocated to the +FLASH disk group created on the SSD devices.
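The HDD capacity figures above follow from dividing the raw capacity by the number of copies ASM keeps of each extent. A minimal sketch of that arithmetic, assuming a simple division that ignores ASM metadata and any free space reserved for rebalancing (the function name is hypothetical, not part of any Oracle tool):

```python
def usable_capacity_tb(disk_count, disk_size_tb, mirror_copies):
    """Approximate usable capacity in TB for an ASM disk group.

    Assumption: usable space = raw space / number of mirror copies.
    ASM metadata and rebalance reserve are deliberately ignored.
    """
    raw_tb = disk_count * disk_size_tb
    return raw_tb / mirror_copies


# HDD tier of one storage shelf: 16 disks of 8TB each
hdd_raw_tb = 16 * 8                             # 128TB raw
hdd_double_tb = usable_capacity_tb(16, 8, 2)    # double-mirrored
hdd_triple_tb = usable_capacity_tb(16, 8, 3)    # triple-mirrored

print(f"raw: {hdd_raw_tb}TB")
print(f"double-mirrored: {hdd_double_tb:.1f}TB")
print(f"triple-mirrored: {hdd_triple_tb:.1f}TB")
```

The same function can be applied to the SSD tiers by passing their disk count, size and mirroring level.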

ACFS becomes the default database storage of ODA

Starting from the ODA software version 12.1.0.2, any fresh installation enforces ASM Cluster File System (ACFS) as the only type of database storage, restricting the supported database versions to 11.2.0.4 and greater. When an ODA is upgraded from a previous release, pre-existing databases are not automatically migrated to ACFS, but Oracle provides a tool called acfs_mig.pl for executing this mandatory step on all Non-CDB databases of version >= 11.2.0.4.
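The version restriction can be expressed as a simple comparison of dotted version strings. The sketch below is only an illustration of that rule; the function names are hypothetical and it does not reproduce the checks performed by acfs_mig.pl or oakcli:

```python
MIN_ACFS_DB_VERSION = (11, 2, 0, 4)  # minimum database version stated above


def parse_version(version):
    """Turn a dotted version string such as '11.2.0.4' into a tuple of ints."""
    return tuple(int(part) for part in version.split("."))


def supported_on_acfs(version):
    """Return True when the database version is 11.2.0.4 or greater."""
    return parse_version(version) >= MIN_ACFS_DB_VERSION


for db_version in ("11.2.0.3", "11.2.0.4", "12.1.0.2"):
    print(db_version, supported_on_acfs(db_version))
```

Comparing tuples of integers avoids the pitfalls of comparing version strings lexically.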

Oracle has decided to promote ACFS as the default database storage on ODA environments for the following reasons:

- ACFS provides performance almost equivalent to that of Oracle ASM disk groups.

- Additional functionality on top of an industry-standard POSIX file system.

- Database snapshot copies of PDBs, and of Non-CDBs of version 11.2.0.4 or greater.

- Advanced functionality for general-purpose files such as replication, tagging, encryption, security, and auditing.

Databases created on ACFS follow the same Oracle Managed Files (OMF) standard used by ASM.

As in the past, database provisioning requires the command line interface oakcli and the selection of a database template, which defines several characteristics including the amount of space to allocate on each file system. Container and Non-Container databases can coexist on the same Oracle Database Appliance.

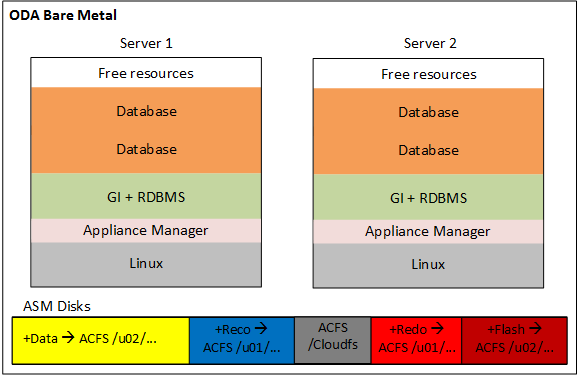

The ACFS file systems are created during the database provisioning process on top of the ASM disk groups +DATA, +RECO, +REDO, and optionally +FLASH. The file systems have two possible setups, depending on whether the database is a Container or a Non-Container database.

- Container database: for each CDB the ODA database-provisioning job creates dedicated ACFS file systems with the following characteristics:

| Disk Characteristics | ASM Disk group | ACFS Mount Point |
|---|---|---|
| SAS Disk external partition | +DATA | /u02/app/oracle/oradata/datc<db_unique_name> |
| SAS Disk internal partition | +RECO | /u01/app/oracle/fast_recovery_area/rcoc<db_unique_name> |
| SSD Triple-mirrored | +REDO | /u01/app/oracle/oradata/rdoc<db_unique_name> |
| SSD Double-mirrored | +FLASH | (*) /u02/app/oracle/oradata/flashdata |

- Non-Container database: for a Non-CDB, the ODA database-provisioning job creates or resizes the following shared ACFS file systems:

| Disk Characteristics | ASM Disk group | ACFS Mount Point |
|---|---|---|
| SAS Disk external partition | +DATA | /u02/app/oracle/oradata/datastore |
| SAS Disk internal partition | +RECO | /u01/app/oracle/fast_recovery_area/datastore |
| SSD Triple-mirrored | +REDO | /u01/app/oracle/oradata/datastore |
| SSD Double-mirrored | +FLASH | (*) /u02/app/oracle/oradata/flashdata |

(*) Optionally used by the databases as Smart Flash Cache (extension of the SGA buffer cache), or allocated to store the hottest data files leveraging the I/O performance of the SSD disks.
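The two mount point layouts listed above can be summarized in a small lookup helper. This is a sketch for illustration only: the function name and the example database name `mycdb` are hypothetical, and the paths are simply the ones given in the two tables.

```python
def acfs_mount_points(db_unique_name, is_cdb):
    """Return the ACFS mount point per ASM disk group, as listed in the tables.

    Container databases get dedicated file systems whose names embed the
    db_unique_name; Non-Container databases share the 'datastore' file systems.
    """
    if is_cdb:
        return {
            "+DATA": f"/u02/app/oracle/oradata/datc{db_unique_name}",
            "+RECO": f"/u01/app/oracle/fast_recovery_area/rcoc{db_unique_name}",
            "+REDO": f"/u01/app/oracle/oradata/rdoc{db_unique_name}",
            "+FLASH": "/u02/app/oracle/oradata/flashdata",  # optional
        }
    return {
        "+DATA": "/u02/app/oracle/oradata/datastore",
        "+RECO": "/u01/app/oracle/fast_recovery_area/datastore",
        "+REDO": "/u01/app/oracle/oradata/datastore",
        "+FLASH": "/u02/app/oracle/oradata/flashdata",  # optional
    }


# 'mycdb' is a made-up db_unique_name used only for this example
for disk_group, path in acfs_mount_points("mycdb", is_cdb=True).items():
    print(disk_group, path)
```

The +FLASH entry is the same in both layouts because the flash file system is shared regardless of the database type.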

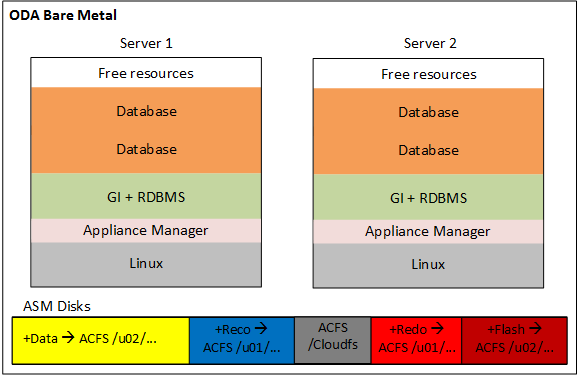

Oracle Database Appliance Bare Metal

The bare metal configuration has been available since version one of the appliance, and it remains the default option proposed by Oracle, which pre-installs the Linux OS on any new system. It is very simple and intuitive to install thanks to the pre-built software bundle, which automates most of the steps. At the end of the installation, the architecture is very similar to any other two-node RAC setup based on commodity hardware, but from an operational point of view there are great advantages, because the Oracle Appliance Manager framework simplifies and accelerates the execution of almost any system and database administration task.

The figure below depicts the ODA architecture when the bare metal configuration is in use:

Oracle Database Appliance Virtualized

When the ODA is deployed with virtualization, both servers run Oracle VM Server, also called Dom0. Each Dom0 hosts, in a local dedicated repository, the ODA Base (or Dom Base), a privileged virtual machine where the Appliance Manager, Grid Infrastructure and RDBMS binaries are installed. The ODA Base takes advantage of Xen PCI pass-through technology to get direct access to the ODA shared disks, which are presented to and managed by ASM. This configuration reduces VM flexibility (no VM migration is allowed for the two ODA Base domains), but it guarantees almost no I/O performance penalty. Once the Dom Base is set up, the basic installation is complete and it is possible to start provisioning databases using Oracle Appliance Manager.

At the same time, the administrator can create new shared repositories, hosted on ACFS and exported over NFS to the hypervisor, for hosting the application virtual machines. These application virtual machines are also known as Domain U. The Domain U and the templates can be stored on a local or shared Oracle VM Server repository, but to enable migration between the two Oracle VM Servers a shared repository on the ACFS file system should be used.

Even when virtualization is in use, Oracle Appliance Manager is the only framework for system and database administration tasks such as repository creation, template import, virtual machine deployment, network configuration, database provisioning and so on, relieving the administrator of all the complexity.

The implementation of the Solution-in-a-box maximizes the return on investment of the ODA: while restricting the virtual CPUs to license on the Dom Base, it allows spare resources to be allocated to the application virtual machines, as shown in the picture below.

ODA compared to Exadata Machine and Commodity Hardware

As described in the previous sections, Oracle Database Appliance offers unique features such as pay-as-you-grow, solution-in-a-box and so on, which can heavily influence the decision for a new database architecture. The aim of the table below is to list the main architecture characteristics to evaluate while defining a new database infrastructure, comparing the results for Oracle Database Appliance, Exadata Machine and a commodity architecture based on Intel Linux engineered to run RAC databases.

As shown by the different scores of the three architectures, each solution comes with strengths and weaknesses. As for the Oracle Database Appliance, its characteristics make it evident that the smallest Oracle Engineered System remains a great option for small and medium database environments.

Conclusion

I hope this article keeps its initial promise to explain the technical reasons behind the success of the Oracle Database Appliance, and that it has highlighted the great work done by Oracle in engineering this solution at the leading edge of technology while keeping the price under control.

One last summary of what, in my opinion, are the major benefits offered by the ODA:

- Time-to-market: Thanks to automated processes and pre-built software images, the deployment phase is extremely rapid.

- Simplicity: The use of standard software components, combined with the appliance orchestrator Oracle Appliance Manager, makes the ODA very simple to operate.

- Standardization & Automation: The Appliance Manager encapsulates and automates all repeatable and error-prone tasks like provisioning, decommissioning, patching and so on.

- Vendor certified platform: Oracle validates and certifies the compatibility among all HW & SW components.

- Evolution: Over time, the ODA benefits from specific bug fixes and software evolution (introduced by Oracle through the quarterly patch sets), keeping the system up to date for longer than a commodity architecture.